SILICON VALLEY - With the advances in post production and digital intermediate production, a growing share of content for television and theatrical film release is produced entirely in a digital format. Making content data accessible to multiple production application clients at once can therefore substantially reduce time-to-completion for production projects. The problem is maintaining the necessary delivery quality.

This seemingly simple goal is difficult to achieve because it requires high-performance, reliable data delivery from magnetic disk storage to client workstations. The crux of the problem is bandwidth: high definition (HD) compressed video data can require up to 185 MB/s, 2K digital film images up to 330 MB/s, and 4K digital film up to 1.2 GB/s. Data transfer in these ranges challenges data storage systems because content must be delivered from storage to the client application with precisely controlled timing.

The fundamental problem with existing storage architectures is that the storage and delivery of digital content are tightly coupled. To deliver 1.2 GB/s, every segment of the data path - from storage through the network, to the end workstation adapter, and finally to the application receiving buffers - must meet the required quality of delivery at the same 1.2 GB/s throughput. The weakest link is the storage system, which is built on conventional disk drives whose I/O performance is closely tied to the rotational speed of the disk platter. Disk drive-based storage often suffers severe performance degradation when multiple reads and writes to data blocks are requested concurrently, because the disk read/write actuators thrash rapidly between them. In this case, performance can be reduced by as much as 90 percent.
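The point that every segment must sustain the same rate can be illustrated with a minimal sketch: the end-to-end throughput of a tightly coupled path is bounded by its slowest segment. The per-segment capacities below are hypothetical values chosen for illustration only; the 1.2 GB/s requirement and the 90 percent degradation are the figures cited above.

```python
def bottleneck(segments_mb_s):
    """Return (segment name, MB/s) for the slowest segment in the data path."""
    name = min(segments_mb_s, key=segments_mb_s.get)
    return name, segments_mb_s[name]

# Hypothetical per-segment capacities in MB/s (illustrative, not measured).
path = {
    "storage": 1500.0,
    "network": 2000.0,
    "workstation_adapter": 1600.0,
    "application_buffers": 1800.0,
}
REQUIRED_MB_S = 1200.0  # 4K digital film rate cited in the text (1.2 GB/s)

healthy = bottleneck(path)

# Worst case from the text: thrashing removes 90 percent of storage throughput,
# so only 10 percent is retained.
RETAINED_PERCENT = 10
thrashing_path = dict(path, storage=path["storage"] * RETAINED_PERCENT / 100)
degraded = bottleneck(thrashing_path)

print(healthy, healthy[1] >= REQUIRED_MB_S)
print(degraded, degraded[1] >= REQUIRED_MB_S)
```

With these assumed numbers, the path meets the 4K requirement while the disks stream sequentially, and falls far below it once the storage segment starts thrashing, even though no other segment has changed.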

For mission-critical production environments where both throughput and scalability are paramount, Storage Area Networks (SANs) are typically used. In a SAN environment, shared file systems must be installed to provide file-level metadata synchronization among client applications. With shared file systems, multiple client applications can concurrently access the same set of files without data corruption. However, the underlying storage performance limitation remains. In fact, a shared storage environment allows many clients to access storage at once, at aggregate throughput levels many times that of a single client. This situation further aggravates the storage access throughput bottleneck.

As production environments become increasingly networked, network efficiency depends more and more on the availability of scalable, high-performance storage access solutions. Technology based on disk storage arrays simply cannot continue to meet the demands of a truly shared network environment for high-quality media content. To solve this problem, several elements must be addressed.

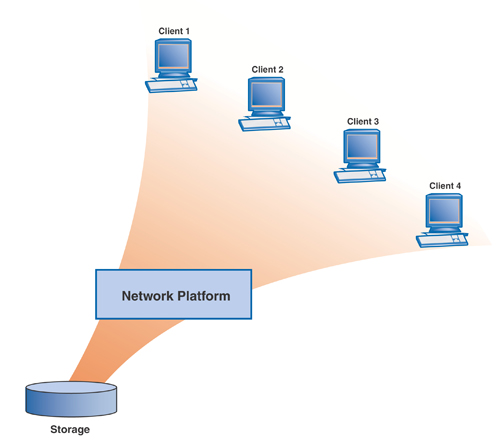

Decoupling storage from I/O means that the storage subsystem is no longer the primary system for delivering data to client workstations. Instead, a network platform can be used to route data from storage to the client.

The network platform can contain a large amount of dynamic RAM buffering, up to one or two terabytes. It can also have a large number of processors closely coupled to the I/O ports. As a result, data no longer moves directly from storage to the client; the network platform mediates the transfer. With this large amount of buffering, the network platform retrieves data from storage independently of the client's content request pattern. This decoupling of storage from I/O has immediate throughput benefits because performance is no longer constrained by the mechanical limitations of the disk drive itself.

A large dynamic cache frees the network platform to request data from storage in a way that is optimized for sustained disk reads. For example, when two data streams are requested from the same disk storage, both at 1 Gb/s, the network platform can retrieve 5 GB of data for one stream at a 2 Gb/s sustained rate before retrieving data for the other stream at the same rate. The content is stored in the dynamic cache buffers first and delivered to the clients from there. Without a network platform, the storage system must provide two 1 Gb/s content streams with tightly interleaved delivery patterns, which substantially degrades storage system performance.
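The arithmetic behind this example can be checked with a short sketch. It assumes that the 5 GB batch means gigabytes (40 gigabits) and that the stream and disk rates are in gigabits per second; the article does not spell out the units, so treat these as assumptions. The function name `batch_schedule` is hypothetical.

```python
def batch_schedule(stream_rate_gbps, disk_rate_gbps, batch_gbit, n_streams):
    """Check whether round-robin batched reads can keep every stream fed.

    Returns (read_time_s, play_time_s, cycle_time_s, sustainable).
    """
    read_time = batch_gbit / disk_rate_gbps    # seconds to pull one batch off disk
    play_time = batch_gbit / stream_rate_gbps  # seconds that batch lasts at the client
    cycle_time = read_time * n_streams         # time to serve every stream once
    # Each stream must be refilled before its buffered batch runs out.
    return read_time, play_time, cycle_time, cycle_time <= play_time

# Figures from the text: two 1 Gb/s streams, 2 Gb/s sustained disk reads,
# 5 GB batches (assumed here to be 5 * 8 = 40 gigabits).
two_streams = batch_schedule(1, 2, 40, 2)
three_streams = batch_schedule(1, 2, 40, 3)

print(two_streams)
print(three_streams)
```

Under these assumptions, each batch takes 20 seconds to read and feeds its stream for 40 seconds, so alternating between two streams just keeps both supplied from the cache. A third stream at the same rates would exceed what the disk can sustain, which is the limit the sketch reports.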

Further, to reduce or eliminate data fragmentation in storage, concurrent writes can be organized as a single write of large data blocks for one session. This procedure dramatically lowers fragmentation on underlying file systems and increases system performance.
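A minimal sketch of this idea follows, assuming writes are buffered per session in memory and flushed as one contiguous payload once a size threshold is reached. The class name `WriteCoalescer`, the threshold, and the in-memory `flushed` list (standing in for real disk writes) are all hypothetical and not taken from any particular product.

```python
from collections import defaultdict

class WriteCoalescer:
    """Buffer small writes per session and flush each session as one large write."""

    def __init__(self, flush_threshold):
        self.flush_threshold = flush_threshold  # bytes to accumulate before flushing
        self.pending = defaultdict(list)        # session_id -> buffered chunks
        self.flushed = []                       # (session_id, payload) stand-ins for disk writes

    def write(self, session_id, data):
        self.pending[session_id].append(data)
        buffered = sum(len(chunk) for chunk in self.pending[session_id])
        if buffered >= self.flush_threshold:
            self.flush(session_id)

    def flush(self, session_id):
        chunks = self.pending.pop(session_id, [])
        if chunks:
            # One contiguous write per session instead of many interleaved ones.
            self.flushed.append((session_id, b"".join(chunks)))

coalescer = WriteCoalescer(flush_threshold=8)
# Two sessions issue small writes that arrive interleaved.
coalescer.write("A", b"aaaa")
coalescer.write("B", b"bbbb")
coalescer.write("A", b"AAAA")
coalescer.write("B", b"BBBB")
print(coalescer.flushed)
```

Four interleaved 4-byte writes reach storage as two 8-byte writes, one per session, so each session's data lands contiguously rather than alternating with the other's.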

In summary, one of the most significant benefits of a network platform is its dynamic caching. When multiple users, or the same user, request repeated access to the same block of content data, the data can be delivered from the dynamic cache in the network platform without repeated retrievals from hard-disk-based storage. This can improve performance substantially because the dynamic cache provides faster access than the storage system.
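The effect of repeated access can be sketched with a small block cache. The article does not name an eviction policy, so least-recently-used (LRU) is an assumption here, and `BlockCache` and `fake_storage` are hypothetical names used only for illustration.

```python
from collections import OrderedDict

class BlockCache:
    """LRU cache of content blocks placed in front of a slower storage read function."""

    def __init__(self, capacity_blocks, read_from_storage):
        self.capacity_blocks = capacity_blocks
        self.read_from_storage = read_from_storage
        self.blocks = OrderedDict()  # block_id -> data, oldest entry first
        self.hits = 0
        self.misses = 0

    def read(self, block_id):
        if block_id in self.blocks:
            self.blocks.move_to_end(block_id)  # mark as most recently used
            self.hits += 1
            return self.blocks[block_id]
        self.misses += 1
        data = self.read_from_storage(block_id)
        self.blocks[block_id] = data
        if len(self.blocks) > self.capacity_blocks:
            self.blocks.popitem(last=False)    # evict the least recently used block
        return data

storage_reads = []  # records every read that actually reaches disk

def fake_storage(block_id):
    storage_reads.append(block_id)
    return f"block-{block_id}".encode()

cache = BlockCache(capacity_blocks=4, read_from_storage=fake_storage)
# Three clients each request the same three blocks.
for _client in range(3):
    for block_id in (0, 1, 2):
        cache.read(block_id)

print(cache.hits, cache.misses, storage_reads)
```

Nine client requests result in only three reads from disk-based storage; the remaining six are served from memory.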

Creating a high-bandwidth network and storage solution that gives producers and editors independent, simultaneous access to high-resolution content has been one of the key challenges in the media industry. The next generation of high-resolution content delivery systems must find new ways to meet the demand for real-time, high-bandwidth media content. The network platform presents a truly viable solution for today's requirements and for future applications.